Data quality is crucial for any organization. With poor quality data, teams across the company end up making decisions based on misleading or incomplete information. This wastes time and resources that could have been better utilized elsewhere. Having the right data quality tools and processes in place helps identify errors, inconsistencies, inaccuracies, and incompleteness in data sets. This enables organizations to improve overall data health and derive greater value from analytics and business insights.

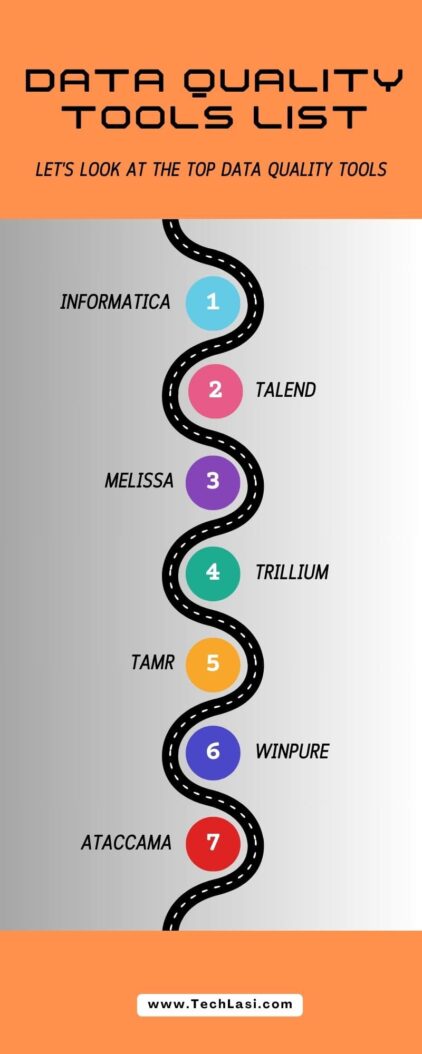

Before looking at the top data quality tools available, it helps to understand why data quality matters and what these tools have in common.

Why Is Data Quality Important?

High-quality data has a tremendous impact across the enterprise. Here are some key reasons why organizations must prioritize data quality:

- Better decision making: With accurate, complete data, business leaders can make data-driven decisions with confidence. There is less risk in strategy and planning when the underlying data can be trusted.

- Higher productivity: When data issues are minimized through quality checks, employees waste less time tracking down and fixing bad data. They can focus on core responsibilities.

- Improved customer experiences: With data quality tools, customer information remains up-to-date across systems. Support and service teams have what they need to quickly resolve issues.

- Compliance: Many regulations around data handling require organizations to ensure a base level of data quality. Tools to manage quality reduce compliance risk.

Core Capabilities Of Data Quality Tools

As we review top data quality solutions, you’ll notice they share some common capabilities:

- Data profiling – Scans data to detect anomalies, inconsistencies, missing values, and violations of integrity constraints. This provides visibility into overall quality.

- Data monitoring – Tracks data quality KPIs on an ongoing basis to quickly catch new issues as they emerge.

- Data parsing and standardization – Normalizes data values into consistent formats, for example by mapping different date formats to a single standard.

- Data enrichment – Enhances data by appending related information from other sources. Often uses reference data.

- Data matching – Identifies duplicate records across or within datasets through likelihood scoring. Facilitates removal of duplicates.

- Data governance – Provides oversight into data quality roles, policies, processes, issue tracking and workflows.
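
To make two of these capabilities concrete, here is a minimal Python sketch of profiling (measuring how complete a field is) and standardization (mapping several date formats to one). The sample records, field names, and list of accepted formats are assumptions made up for illustration; commercial tools implement far richer versions of both ideas.

```python
from datetime import datetime

# Hypothetical sample records; field names are illustrative only.
records = [
    {"id": 1, "email": "ann@example.com", "signup": "2023-01-15"},
    {"id": 2, "email": "", "signup": "15/02/2023"},
    {"id": 3, "email": "bob@example.com", "signup": "March 3, 2023"},
    {"id": 4, "email": None, "signup": "not a date"},
]

# Date formats we assume appear in the source data.
KNOWN_FORMATS = ["%Y-%m-%d", "%d/%m/%Y", "%B %d, %Y"]


def completeness(rows, field):
    """Profiling: share of rows where `field` is present and non-empty."""
    filled = sum(1 for row in rows if row.get(field) not in (None, ""))
    return filled / len(rows)


def standardize_date(value):
    """Standardization: convert a known format to ISO 8601, else None."""
    for fmt in KNOWN_FORMATS:
        try:
            return datetime.strptime(value, fmt).date().isoformat()
        except ValueError:
            continue  # try the next assumed format
    return None  # unparseable values are flagged rather than guessed


email_completeness = completeness(records, "email")
standardized = [standardize_date(row["signup"]) for row in records]

print(f"email completeness: {email_completeness:.0%}")
print(standardized)
```

Returning `None` for values that match no known format keeps bad dates visible for review instead of silently coercing them.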

Top 14 Data Quality Solutions

Based on analyst reviews and customer satisfaction, below are 14 of the top data quality tools available today:

1. Informatica

Informatica offers an end-to-end data management platform. A major part of it is the Informatica Intelligent Data Quality and Governance portfolio, which includes products for data quality, data masking, data parsing, and data governance.

Key Features:

- Broad connectivity to source systems

- Machine learning algorithms to automate data quality processes

- Centralized business glossary and data catalogue

- Collaborative workflows for data stewards

- Packaged solutions for customer, product, supplier, and other domains

Informatica is particularly strong for large, complex environments.

2. Talend Data Fabric

The Talend platform provides capabilities ranging from data integration to API services and big data management. Within this portfolio, Talend Data Quality performs critical functions like standardization, deduplication, and pattern matching.

Key Features:

- Drag and drop interface

- Machine learning for pattern discovery

- Reference data library

- Batch and real-time data quality monitoring

- Role based governance workflows

Talend offers flexible deployment options including cloud and containerized runtimes.

3. Melissa

Melissa focuses specifically on address verification, contact data quality, and identity verification solutions. Their tools interface with CRM and marketing automation systems to maintain accurate customer data.

Key Features:

- Global address verification across 240+ countries

- Email address hygiene

- Phone number correction tools

- Multi layer identity confirmation

- List matching services

- Tools for GDPR and CCPA compliance

Melissa integrates seamlessly with Salesforce, Marketo, Oracle, SAP, and other client systems.

4. Trillium Software

Part of the Precisely portfolio, Trillium discovery solutions analyze data to understand completeness, validity, uniqueness, and integrity. This serves as the foundation for a full data quality lifecycle.

Key Features:

- Automated data profiling

- Data parsing and standardization

- Generalized pattern recognition

- Hierarchical data quality dashboards

- Reference data management

- Text data enrichment

Trillium’s data quality functions can be deployed across cloud, on-premises, real-time, and big data environments.

5. Tamr

Tamr applies machine learning algorithms to categorize source data and resolve inconsistencies at scale. This allows organizations to unify disparate datasets.

Key Features:

- Cloud native SaaS platform

- Machine learning pipeline for data curation

- Scalable data mastering across siloed sources

- Data change alerts and monitoring

- Role-based data stewardship model

Tamr is purpose built to handle large, complex enterprise datasets.

6. WinPure

WinPure focuses on addressing data quality through cleansing, deduplication, and enrichment functions. These prepare data for migration initiatives or analytics use cases.

Key Features:

- Intuitive visual interface

- Automated matching to remove duplicate records

- Tools for standardization and validation

- Real-time integration and processing

- Can be customized to specific data models

- On premise, cloud, or hybrid options

WinPure is noted for its rapid implementation cycles and ease of use.

7. Ataccama

The Ataccama ONE platform provides modules for data quality, data catalogue, data governance, data integration, and master data management. Its core capabilities focus on profiling, validation, matching, and monitoring.

Key Features:

- Embedded machine learning algorithms

- Configuration over customization

- Collaborative issue tracking

- Reference data library

- Audit trail tracking

Ataccama offers extensive integration support across on premise, cloud, or hybrid environments.

8. Information Builders

Information Builders maintains the iWay brand for data management software. iWay Data Quality in particular assesses data based on various validation and enrichment techniques. It then standardizes values for consistency.

Key Features:

- Browser based environment

- Real time web service integration

- Fuzzy matching algorithms

- Hierarchical profiling views

- Automated parsing and standardization

- International reference data

iWay can deploy on customer infrastructure or through the iWay Cloud service.

9. Experian

Experian focuses largely on data quality technologies to support customer data management for marketing analytics and decisioning. This includes data discovery, probabilistic matching, and record resolution capabilities.

Key Features:

- Specialized data quality for customer data

- Integration APIs to CRM and databases

- Automated linking of related records

- Real time web service calls

- Cloud delivery model

- Packaged data quality metrics and reports

Experian primarily serves organizations looking to improve customer experiences.

10. Data Ladder

Data Ladder provides data quality, management, and analytics solutions tailored to ERP and CRM platforms like NetSuite, Salesforce, and Oracle. Core functions enable automated data assessment, correction, and enrichment.

Key Features:

- Specialized for ERP data models

- Automated data parsing and translation

- Embedded data validation rules

- Machine learning data matcher

- Easy to interpret dashboards

- On premise or multi cloud deployment

Data Ladder integrates seamlessly with leading ERPs and CRMs.

11. Quadient

Formerly Human Inference, Quadient offers a set of data quality solutions focused primarily on customer data. This helps organizations maintain accurate customer profiles through validation, enrichment, and matching techniques.

Key Features:

- Global postal address verification

- Email deliverability confirmation

- Phone number appends and correction

- Name matching algorithm

- Cloud delivery model

Quadient provides out of the box integrations with Salesforce, Oracle, SAP, and more.

12. Syniti

Through the Syniti Knowledge Platform, Syniti adds data quality assessment, data cleansing, and data enrichment capabilities aimed primarily at SAP migrations, consolidations, mergers & acquisitions, or analytics initiatives.

Key Features:

- Data quality functions tailored to SAP

- Analysis of data gaps and anomalies

- Automated standardization rules

- Reference data services

- Reusable data quality rules

- SaaS deployment

Syniti mainly serves large, complex SAP customer environments.

13. DataQualityPro

DataQualityPro focuses on address verification, email validation, phone append technologies, and related data quality services for organizations worldwide.

Key Features:

- Global postal address verification

- Email validation confirming deliverability

- Phone number appends including mobile

- Smart phone number recognition

- Cloud delivery model

DataQualityPro mainly serves marketing organizations looking to manage customer data.

14. Validity

Validity solutions help marketing teams maintain updated, accurate customer contact data including addresses, phone numbers and email addresses. This improves marketing campaign performance.

Key Features:

- Email verification and validation

- Identity resolution for detecting duplicates

- Interactive dashboards for tracking data health

- Flexible integration options

Validity integrates seamlessly with commonly used marketing tools.

Key Factors For Evaluation

As you evaluate data quality solutions, focus on a few key dimensions:

- User experience – Seek intuitive interfaces allowing business users to implement data quality workflows with minimal IT help.

- Deployment options – Consider solutions that offer platform flexibility, including on-premises, multi-cloud, and containerized deployment.

- Embedded machine learning – Look for platforms with built-in algorithms that learn data behaviors and recommend data quality enhancements.

- Partner ecosystem – Review the pre-built connectors, predefined test cases, preconfigured dashboards, and professional service partners available for the platform.

- Infrastructure requirements – Upfront sizing analysis is essential to plan capacity for scale.

Be sure to balance features and functionality, ease of use, flexibility, and total cost of ownership (TCO) based on your organization’s specific data challenges, operating model, and business objectives.
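
One common way to balance these dimensions is a weighted scoring matrix. The sketch below is only an illustration: the weights, the 1-to-5 ratings, and the names "Tool A" and "Tool B" are hypothetical placeholders, not assessments of any vendor in this list.

```python
# Hypothetical weights reflecting one organization's priorities (sum to 1.0).
weights = {"features": 0.4, "ease_of_use": 0.3, "flexibility": 0.2, "tco": 0.1}

# Hypothetical ratings on a 1-5 scale. For "tco", a higher rating
# means a lower (better) total cost of ownership.
ratings = {
    "Tool A": {"features": 5, "ease_of_use": 3, "flexibility": 4, "tco": 2},
    "Tool B": {"features": 4, "ease_of_use": 5, "flexibility": 3, "tco": 4},
}


def weighted_score(tool_ratings, criteria_weights):
    """Sum each criterion's rating multiplied by its weight."""
    return sum(criteria_weights[c] * tool_ratings[c] for c in criteria_weights)


scores = {name: round(weighted_score(r, weights), 2) for name, r in ratings.items()}
ranked = sorted(scores, key=scores.get, reverse=True)

for name in ranked:
    print(f"{name}: {scores[name]}")
```

Changing the weights to match your own priorities can change the ranking, which is the point of the exercise: it makes the trade-offs explicit before you commit to a platform.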

Conclusion

Implementing the right data quality solution enables confident decision making, productive employees, satisfied customers, and reduced compliance risk through trusted information assets. While the volume and variety of data will continue to grow, these 14 vendors offer proven data quality tools to get ahead of information chaos. Evaluating solutions against key requirements around usability, deployment, machine learning, and infrastructure will lead to the best long-term platform choice.

Striking the optimal balance between automation and governance, together with the flexibility to handle evolving regulations, will maximize your data quality program ROI. Reach out for personalized platform assessments and A/B testing services to experience these solutions first hand.

FAQs

Which data quality tool is best?

There is no one “best” data quality tool. The right solution depends on your specific requirements, infrastructure, use cases, and budget. Leaders like Informatica, Talend, Tamr, and Trillium offer excellent capabilities, but you should still evaluate each of them across many dimensions.

Does machine learning help with data quality?

Yes, many leading platforms now use machine learning algorithms to automatically discover data quality rules, parse and standardize values, match records, and more. This automation reduces the manual effort of defining and maintaining those rules by hand.

Can data quality tools fix bad data?

Advanced data quality platforms use techniques like parsing, standardization, enhancement, deduplication and more to effectively fix issues in existing datasets. However, a sustainable data quality practice requires governance processes to stop the inflow of new bad data.
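
As a rough illustration of deduplication by similarity scoring, the sketch below uses Python's standard `difflib.SequenceMatcher` to drop near-duplicate names. The customer names and the 0.9 threshold are assumptions chosen for the example; production tools generally use more sophisticated matching over multiple fields.

```python
from difflib import SequenceMatcher

# Hypothetical customer names; the second is a misspelled duplicate of the first.
customers = [
    "Acme Corporation",
    "acme  corporaton",
    "Globex Inc",
    "Initech LLC",
]

THRESHOLD = 0.9  # assumed cut-off; tune it against your own data


def normalize(name):
    """Lowercase and collapse whitespace before comparing."""
    return " ".join(name.lower().split())


def similarity(a, b):
    """Similarity score between 0.0 and 1.0 for two names."""
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio()


def deduplicate(names, threshold=THRESHOLD):
    """Keep a name only if it is not too similar to one already kept."""
    kept = []
    for name in names:
        if all(similarity(name, existing) < threshold for existing in kept):
            kept.append(name)
    return kept


unique_customers = deduplicate(customers)
print(unique_customers)
```

This keeps the first occurrence of each cluster and compares every name against all kept names, which is fine for small lists but scales quadratically. In practice, likely duplicates are usually routed to a data steward for review rather than deleted automatically.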

What does a data quality analyst do?

A data quality analyst oversees programs to monitor, measure and improve the health of information assets. Responsibilities include data profiling, implementing quality tools, documenting issues, working with stakeholders to fix problems and defining data quality standards.

How long does it take to implement data quality software?

While simple tools may take only weeks to implement, enterprise-wide deployments with extensive integrations usually take 6 to 12 months for an end-to-end rollout. Piloting solutions before wide-scale integration is strongly advised.
